By Clixtell Content Team | April 19, 2026

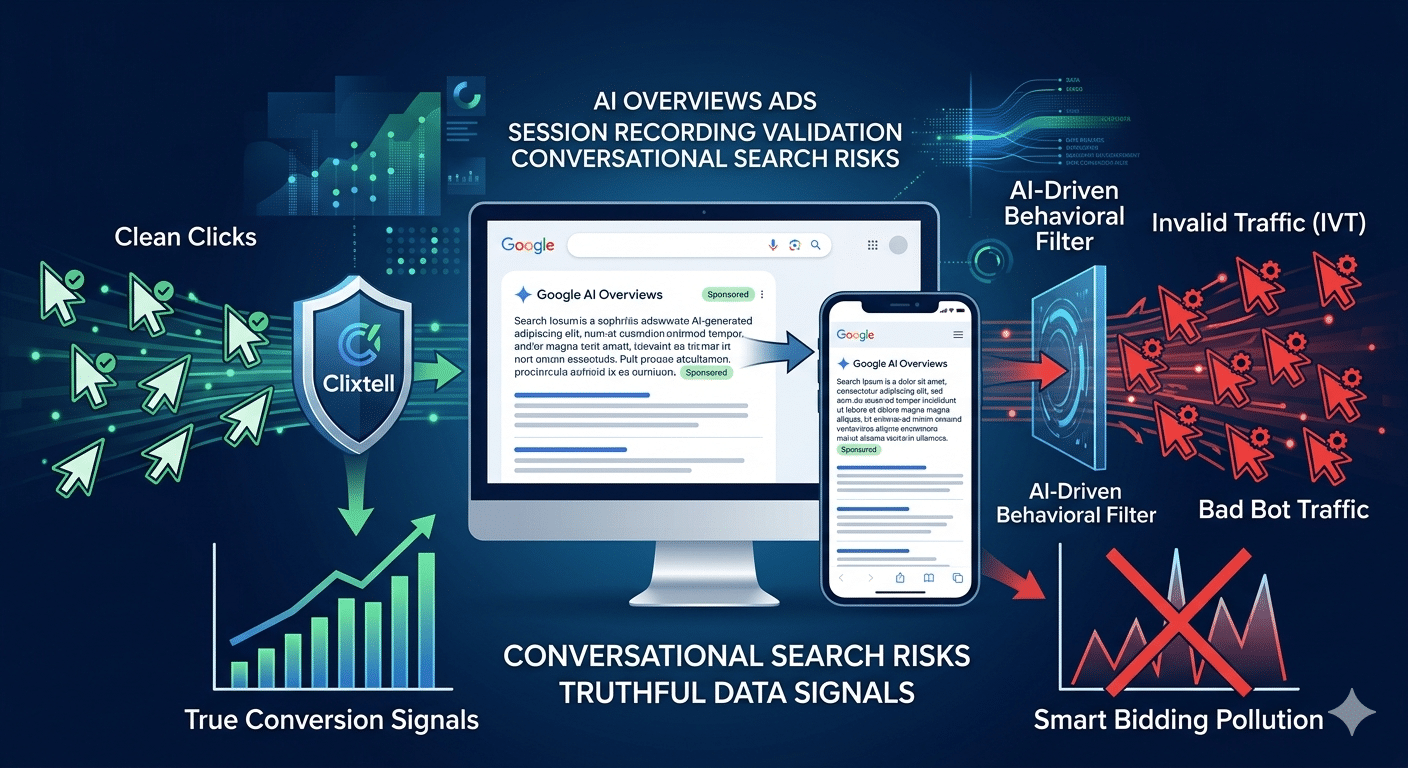

AI Search Ads and Click Fraud: What PPC Teams Can Actually Verify in 2026

Search behavior is changing fast. Google now shows ads in AI Overviews on mobile and desktop in multiple countries. Google also says existing Search, Shopping, and Performance Max campaigns can become eligible automatically. In the U.S., Google is testing ads in AI Mode. OpenAI is also testing clearly labeled ads in ChatGPT for Free and Go users in a phased rollout. For PPC teams, this means discovery is becoming more conversational, more dynamic, and harder to judge with old reporting habits alone.

This topic also needs a clear boundary. SearchGPT was a temporary prototype that OpenAI planned to fold into ChatGPT. It is not a mature standalone ad platform. OpenAI’s own documentation says ChatGPT ads appear below the end of a response. They are clearly labeled and visually separated from the answer itself. So the real 2026 risk is not that every AI answer has become a hidden ad slot. The real risk is that AI search expands how platforms interpret intent, opens more exploratory commercial moments, and reduces some of the placement clarity advertisers once relied on.

What changed in AI search advertising

Google’s AI Overviews are now a real ad surface. Google Ads Help says ads in AI Overviews are available in English on mobile and desktop in countries including the U.S., Canada, Australia, and India. Google can serve those ads from existing Search, Shopping, and Performance Max campaigns. It also uses both the user’s query and the AI Overview content to determine relevance.

This shift changes both eligibility and placement. Advertisers cannot target only AI Overviews. They also cannot opt out of serving there. Google Ads still does not provide segmented reporting for AI Overview placements. So the ad surface is real, but the reporting remains limited. That measurement gap appears before most advertisers even think about fraud.

AI Mode pushes this further. Google describes AI Mode as a more advanced search experience for deeper questions and follow-up conversations. Under the hood, it uses a query fan-out method that runs multiple searches on a user’s behalf. Google Ads Help also says ads in AI Mode are being tested in the U.S. When relevant, those ads may appear below and inside AI Mode responses. As a result, the path from question to click becomes more conversational and less linear than classic search.

OpenAI’s model is narrower. Its Help Center says ads in ChatGPT are rolling out in the U.S., Australia, New Zealand, and Canada. Those ads may appear for Free and Go users, while Plus, Pro, Business, Enterprise, and Edu plans do not have ads. OpenAI also says ads do not influence answers. Instead, the company shows them below the response as clearly labeled sponsored placements. Marketers should stop describing this as “SearchGPT ads” or as sponsored citations inside the answer itself.

Where the real risk starts

The automation problem is already here

The fraud risk is real because the web is already saturated with automation. Imperva’s 2025 Bad Bot Report says automated traffic accounted for 51% of all web traffic in 2024, while bad bots alone made up 37%. The same report highlights growing browser impersonation and easier access to AI-assisted automation. Those changes help attackers look more like normal users.

Google’s own invalid traffic definition is also broader than many advertisers assume. The company includes accidental clicks, manual clicks meant to raise costs, automated tools, bots, spiders, crawlers, deceptive software, known invalid data-center traffic, and irregular patterns identified through monitoring. Google also says advertisers do not pay for invalid clicks or impressions that its systems detect. If its systems detect them later, Google may issue credits or adjustments.

Why filtering alone is not enough

Google’s filtering is useful, but billing protection and traffic-quality validation are not the same thing. A platform may filter some invalid clicks. That alone does not tell you whether the visits that reach your site are commercially useful. It also does not tell you whether lead quality is stable or whether your conversion signals are clean enough to guide Smart Bidding in the right direction. In AI search, that distinction matters even more because matching can widen faster than your reporting can explain.

How AI search widens exposure

AI search increases that pressure in a few specific ways. In AI Overviews, Google says ads can show when commercial intent exists and when the ad is relevant to both the query and the AI Overview content. In AI Max for Search, Google says search term matching expands reach through broad match and keywordless technology. Final URL expansion can also send users to the page predicted to perform best. That can unlock real new demand. It can also widen the surface where irrelevant, low-intent, or manipulated traffic lands.

This is why dashboards can mislead teams in 2026. If you cannot isolate AI Overview traffic in segmented reporting, and if AI-assisted matching broadens who sees your ads, you often discover the problem only after post-click quality drops. By that point, the account may already be training on weaker traffic, weaker leads, or weaker engagement patterns.

Why old click fraud logic breaks here

IP blocking still matters. It is just not enough on its own. Modern bad traffic can rotate IPs, spread activity across infrastructure, imitate ordinary browsing, and hide inside sessions that look acceptable at the chart level. The Media Rating Council (MRC) still maintains formal invalid traffic standards, including guidance around sophisticated invalid traffic, because the problem is no longer limited to obvious duplicate clicks.

That is exactly why the old rule set fails. A rule like “same IP clicked twice” can catch loud abuse. It does not reliably catch the visitor who lands on a page from an exploratory AI search journey, moves just enough to look normal, triggers a soft conversion, and quietly pollutes the account. In conversational search, weak traffic does not always look explosive. Quite often, it looks plausible.

What a defensible workflow looks like now

Start with session-level validation

The first layer is session-level validation. Clixtell’s recorder captures actual mouse movements, clicks, scroll activity, and form interactions. That gives PPC teams a way to inspect what happened after the click instead of guessing from CPC, CTR, bounce rate, or average session duration alone. Clixtell’s own session-recording material makes the same point: many suspicious visits look normal in dashboards until you watch how they behave.

Look for patterns across visits

The second layer is pattern analysis across visits, not one suspicious session at a time. Clixtell’s traffic-quality content describes repeat-pattern clustering across IPs, IP ranges, and ASNs, along with VPN and proxy checks, device fingerprinting, and automated exclusions. That matters in AI search because weak traffic may not repeat from one visible source. In many cases, the pattern appears only after you group behavior across many visits.
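To make the grouping idea concrete, here is a minimal sketch in Python. It is not Clixtell’s implementation. The `Session` fields, the bucket sizes, and the `min_distinct_ips` threshold are all assumptions chosen for illustration. The point is only that sessions from different IPs can collapse into one cluster once you key them on behavior instead of on the address.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    # Hypothetical fields; a real export will have its own schema.
    ip: str
    asn: str
    scroll_depth_pct: int   # deepest scroll position, 0-100
    seconds_on_page: int
    clicks: int


def behavior_signature(s: Session) -> tuple:
    """Bucket raw metrics so near-identical sessions share one key."""
    return (s.asn, s.scroll_depth_pct // 10, s.seconds_on_page // 5, s.clicks)


def suspicious_clusters(sessions, min_distinct_ips=3):
    """Return signatures shared by at least `min_distinct_ips` different IPs."""
    ips_by_signature = defaultdict(set)
    for s in sessions:
        ips_by_signature[behavior_signature(s)].add(s.ip)
    return {
        sig: ips
        for sig, ips in ips_by_signature.items()
        if len(ips) >= min_distinct_ips
    }


# Illustrative data only (documentation IP ranges and private-use ASNs).
sessions = [
    Session("203.0.113.10", "AS64500", 42, 11, 2),
    Session("203.0.113.77", "AS64500", 44, 12, 2),
    Session("198.51.100.5", "AS64500", 41, 13, 2),
    Session("192.0.2.8", "AS64501", 85, 140, 6),
]

clusters = suspicious_clusters(sessions)
for signature, ips in clusters.items():
    print(signature, sorted(ips))
```

A per-IP rule would see three unrelated single visits here. Grouped by signature, they form one cluster worth reviewing. Including the ASN in the key is a design choice: it keeps clusters tight, but traffic that rotates across networks would need a signature without it.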

Protect the conversion signal

The third layer is conversion protection. AI-assisted traffic is not always fraudulent. Some of it is early-stage research traffic. Some of it is curiosity. Some of it reflects poor-fit commercial discovery. The job is not to label everything as fraud. The job is to stop low-quality patterns from being mistaken for successful traffic. That means auditing which conversions count, which lead events are too easy to trigger, and whether sales teams send enough feedback to separate real opportunity from junk.
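One way to run that audit is to compare how often each conversion event fires with how often sales confirms the lead as real. The sketch below assumes you can join conversion counts to sales feedback. The event names, the `min_rate` cutoff, and the `min_count` floor are hypothetical and should be tuned to your own funnel.

```python
from dataclasses import dataclass


@dataclass
class ConversionEvent:
    name: str
    count: int       # conversions recorded for this event
    qualified: int   # leads that sales confirmed as real opportunities


def qualification_rate(event: ConversionEvent) -> float:
    """Share of recorded conversions that sales marked as qualified."""
    return event.qualified / event.count if event.count else 0.0


def events_to_review(events, min_rate=0.2, min_count=20):
    """Names of events with enough volume to judge and a low qualified share."""
    return [
        e.name
        for e in events
        if e.count >= min_count and qualification_rate(e) < min_rate
    ]


# Illustrative numbers only.
events = [
    ConversionEvent("demo_request", count=40, qualified=18),
    ConversionEvent("newsletter_popup", count=300, qualified=6),
    ConversionEvent("pricing_pdf_download", count=12, qualified=1),
]

flagged = events_to_review(events)
print(flagged)
```

An event that fires often but rarely produces a qualified lead is a candidate for demotion to a secondary conversion, so it stops steering bidding. The volume floor keeps small samples from being flagged on noise.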

Keep evidence you can defend

The fourth layer is evidence you can defend. Google says late-detected invalid traffic can appear as credits or adjustments when appropriate. Still, advertisers often need more than that. They need to make internal decisions, explain waste to a client, or build a stronger case around traffic quality. Visible session behavior and repeat-pattern evidence are far more useful than a vague suspicion that “the clicks feel wrong.”

What PPC teams should watch first

Start with the patterns that are most likely to get missed in AI search environments:

- Sessions from complex, answer-seeking queries that arrive with almost no exploration

- Repeated visits from changing IPs but highly similar behavior

- Lead submissions with no meaningful reading, no hesitation, and no buyer-like navigation

- Sudden increases in low-engagement traffic after broad-match expansion, AI Max rollout, or Performance Max changes

- Traffic that looks valid enough in reports but collapses in downstream lead quality

Not every one of these patterns is fraud. Still, each one gives you a reason to validate behavior before you trust the traffic.
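As one example, the fourth pattern can be checked with a simple before-and-after comparison around a campaign change. This is a rough sketch, not a fraud detector. The session fields and the thresholds for “low engagement” (`max_seconds`, `max_scroll_pct`) are assumptions, and the dates are invented.

```python
from datetime import date


def low_engagement_share(sessions, max_seconds=5, max_scroll_pct=10):
    """Fraction of sessions that were both very short and barely scrolled."""
    if not sessions:
        return 0.0
    low = sum(
        1
        for s in sessions
        if s["seconds_on_page"] <= max_seconds
        and s["scroll_depth_pct"] <= max_scroll_pct
    )
    return low / len(sessions)


def share_before_and_after(sessions, change_day):
    """Split sessions at `change_day` and return (before_share, after_share)."""
    before = [s for s in sessions if s["day"] < change_day]
    after = [s for s in sessions if s["day"] >= change_day]
    return low_engagement_share(before), low_engagement_share(after)


# Hypothetical sample: (day, seconds on page, scroll depth %).
rows = [
    (date(2026, 4, 1), 48, 70), (date(2026, 4, 2), 3, 5),
    (date(2026, 4, 3), 95, 90), (date(2026, 4, 4), 30, 55),
    (date(2026, 4, 8), 2, 0), (date(2026, 4, 9), 4, 8),
    (date(2026, 4, 10), 61, 80), (date(2026, 4, 11), 1, 3),
]
sessions = [
    {"day": d, "seconds_on_page": sec, "scroll_depth_pct": scroll}
    for d, sec, scroll in rows
]

# Assume the broad-match or AI Max change went live on April 7.
before, after = share_before_and_after(sessions, change_day=date(2026, 4, 7))
print(f"low-engagement share before: {before:.0%}, after: {after:.0%}")
```

A jump in the share is a reason to open the recordings for the affected sessions. It is not proof of fraud on its own.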

FAQ

Are ChatGPT ads embedded inside the answer itself?

No. OpenAI says ads in ChatGPT appear below the end of a response, are clearly labeled as sponsored, and are visually separated from the response. OpenAI also says ads do not influence the answers ChatGPT gives.

Can I opt out of ads in Google AI Overviews?

No. Google Ads Help says advertisers cannot directly target only AI Overviews, cannot opt out of serving there, and do not currently get segmented reporting specifically for AI Overview placements.

Is Google charging for fake clicks in AI search?

Google says advertisers are not charged for invalid clicks or impressions that its systems detect, and later detections can appear as credits or adjustments. The practical problem for advertisers is broader than billing alone. You still need to verify whether the traffic reaching your site is useful, trustworthy, and clean enough to shape bidding and conversion decisions.

What matters now

In 2026, the smart question is not whether AI search ads are real. They are. Google has introduced ads in AI Overviews and is testing ads in AI Mode. OpenAI is testing a more limited ad model in ChatGPT. The better question is where reporting ends and proof begins. As search becomes more conversational, advertisers need stronger post-click validation, not weaker standards. The teams that win will be the ones that stop guessing from dashboards and start proving traffic quality after the click.

Conversion tracking audits and post-click behavior analysis matter more in this environment because weak traffic can distort bidding decisions long before anyone labels it as invalid.